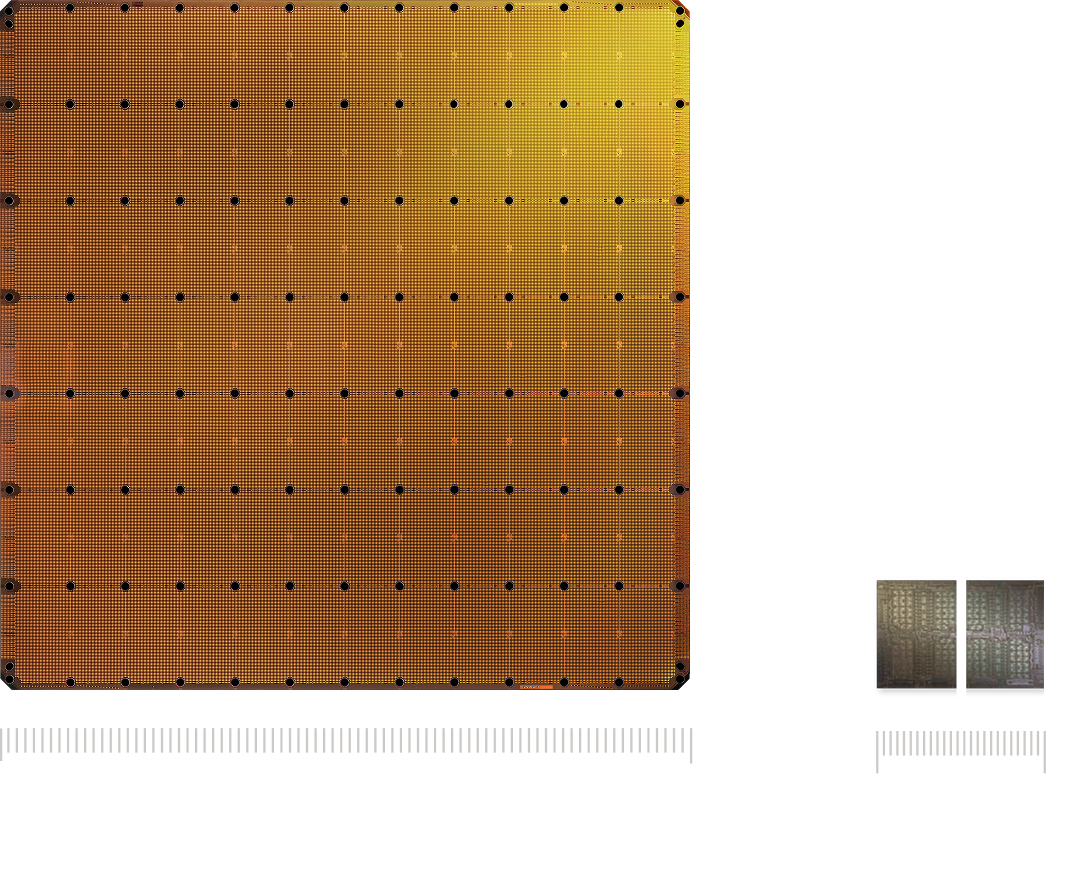

The Future of AI is Wafer Scale

Four trillion transistors. 125 petaflops. One silicon wafer. The world’s largest and most powerful processor for AI training and inference.

Performance comparisons are based on third-party benchmarking or internal testing. Observed inference speed improvements versus GPU-based systems may vary depending on workload, configuration, date, and the models being tested.