AlphaSense

Deeper Research, in a Fraction of the Time

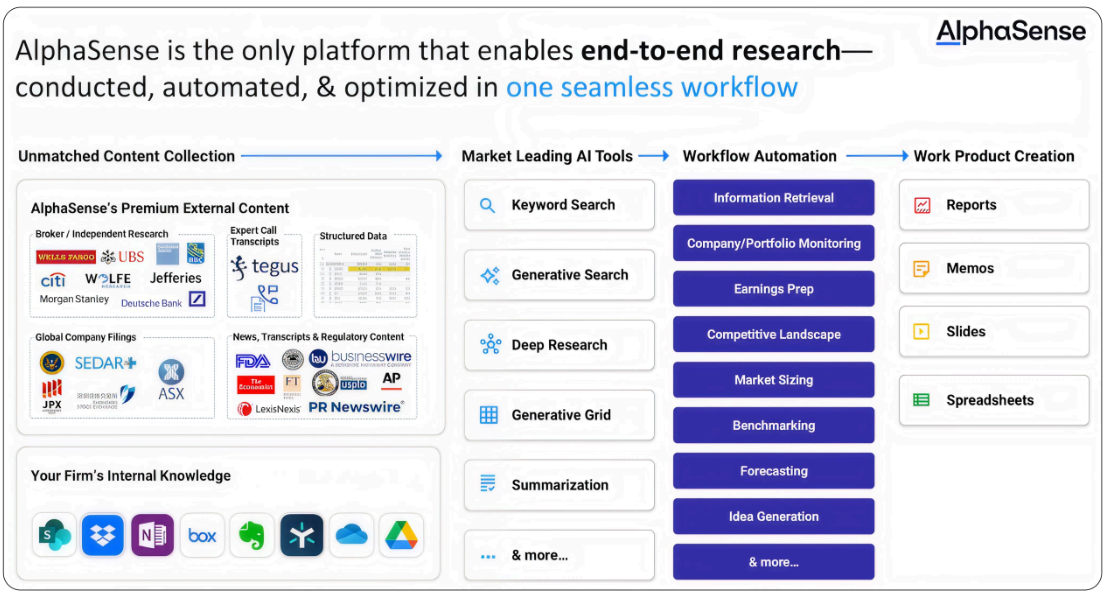

AlphaSense, the end-to-end market intelligence and research platform trusted by 6,500+ enterprises, partnered with Cerebras to accelerate the Generative Search architecture behind its research workflow. With Cerebras Inference, AlphaSense can run more searches, analyze more documents, and complete more tool-enabled tasks with lower latency, helping deliver deeper, fully cited insights faster.